Object Localization

Note to students: The best preparation for taking the reading quiz is to pay close attention to the key terms as you read. Each question in the question banks is directly linked to these key terms and phrases.

Chapter Focus Question:

How do humans determine the location of objects within their environment?

- Vision

- Primitive perceptual system

- Structural components of the eye

- Function of optical components

- Achieving focus

- Supportive structures

- Auditory system

- Echoes

- Outer ear

- Auditory localization

- Sound and timing

Section Focus Question:

What are the structures of the perceptual and auditory systems that enable humans to achieve object localization, and how do they function?

Key Terms:

The problem of object localization is the problem of figuring out where objects are located. The precision of the optics in the eyeball provides the first step for the visual system to gain knowledge about where an object is located. The first eyes to evolve could not solve this problem. The first eyes did not look very much like eyes as we know them. They were just a patch of light-sensitive cells. These primitive eyes still conferred advantages to the animal. Any dimming of the light was a signal that the animal should probably move away from its current location to try to avoid being eaten.

Even this primitive perceptual system would have conferred a survival advantage over animals that could not sense the dimming and stayed in the same place. As eyes evolved, they became better able to detect not just general dimming but also the location of the light, or lack thereof, in the case of an approaching predator. Increased precision in object localization brought with it a survival advantage by allowing the animal to know where to direct subsequent actions.

The Human Eye

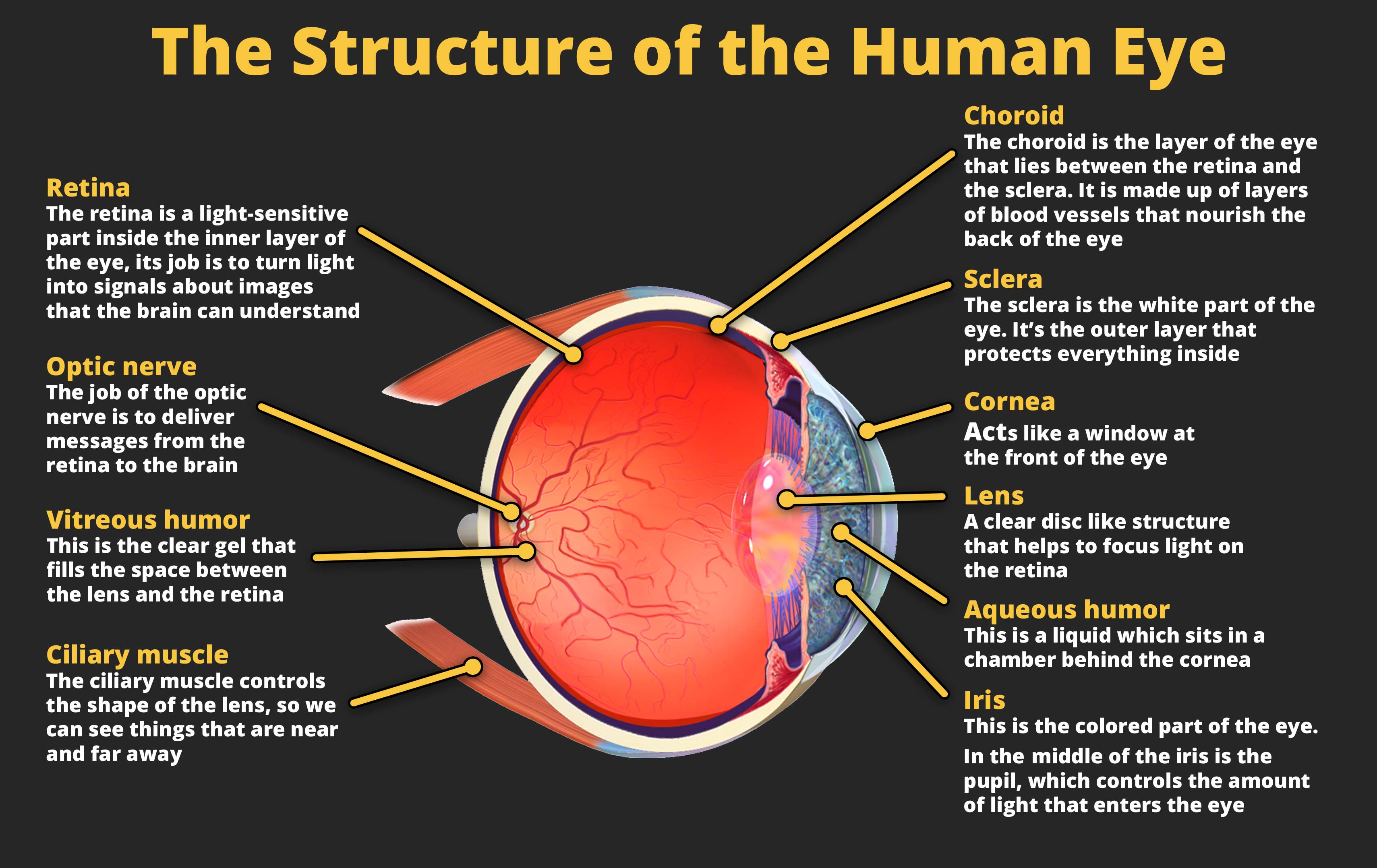

Light is the input for visual perception, and the incoming light is processed by the eyeball. The human eyeball consists of three types of structures: supportive, optical, and neural. The neural structure is the retina. The retina contains photoreceptors, which are cells that fire in response to incoming light. To be able to see clearly, the incoming light must be precisely focused on the photoreceptors. If this focus is not achieved, vision will be blurry. If you wear contact lenses or glasses, you experience this blur whenever you take them off.

The function of the four optical components is to focus the light to achieve clarity. The pupil is the opening that allows light to enter the eye. The iris is a muscle that controls the size of the pupil, which is critical for focus. In bright light, focus is achieved by decreasing the size of the pupil. In low light, focus is achieved by increasing the size of the pupil to allow in more light. The other two optical components are lenses. Both are clear membranes. The first lens is the cornea, which is the outer portion of the eye. The cornea is fixed, meaning that it does not change size or shape. The second lens is simply called the lens and is positioned just behind the pupil. The lens is flexible and grows thicker when the eye looks at nearby objects, which helps bring objects close to the eye into focus. As people grow older, their lenses lose this flexibility, which is why many people need reading glasses as they age.

The optical components can only achieve focus if the shape of the eye is maintained. This is where the supportive structures play a role. The supportive structures include the sclera, which is the visible white portion of the eye. The sclera is a dense, tough material that is needed to maintain the shape of the eye because the pressure inside the eye is higher than the pressure outside it. The aqueous humor, a clear watery fluid, and the vitreous humor, a clear jelly-like substance, both help maintain eye shape by providing cushioning to the eye. They also provide nutrients to the eye. Eye shape is important for focus. Some people need contact lenses or glasses because their eyeball is too long or too short. If the shape of the eyeball is not exactly right, the incoming light will not be focused and the image will be blurry.

The eyeball helps to solve the problem of object localization because the location of the photoreceptors that fire when light falls on them corresponds to the direction of the incoming light. Because the optics of the eye invert the image, the relationship is reversed. If photoreceptors on the right of the eye fire, the object is located to the left. If photoreceptors at the bottom of the eye fire, the object is located above the perceiver. These signals are organized by the direction of their entry into the eye and are sent to the brain based on this organization. As a result, the processes in the brain are able to localize the direction of the object based on how the light entered the eye.
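The reversed mapping described above can be written out as a small lookup. This is only an illustrative sketch: the function name `object_direction` and the four labels are hypothetical, chosen for this example, and the real retina codes direction continuously rather than in four categories.

```python
# Illustrative sketch only: names and labels are hypothetical.
# Each retinal side maps to the opposite side of the visual field,
# following the two examples given in the text.
RETINA_TO_WORLD = {
    "right": "left",
    "left": "right",
    "bottom": "above",
    "top": "below",
}

def object_direction(retinal_side: str) -> str:
    """Return where the object lies, given which side of the retina fired."""
    return RETINA_TO_WORLD[retinal_side]

for side in ("right", "bottom"):
    print(f"photoreceptors on the {side} fire -> object is {object_direction(side)}")
```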

Localization in Audition

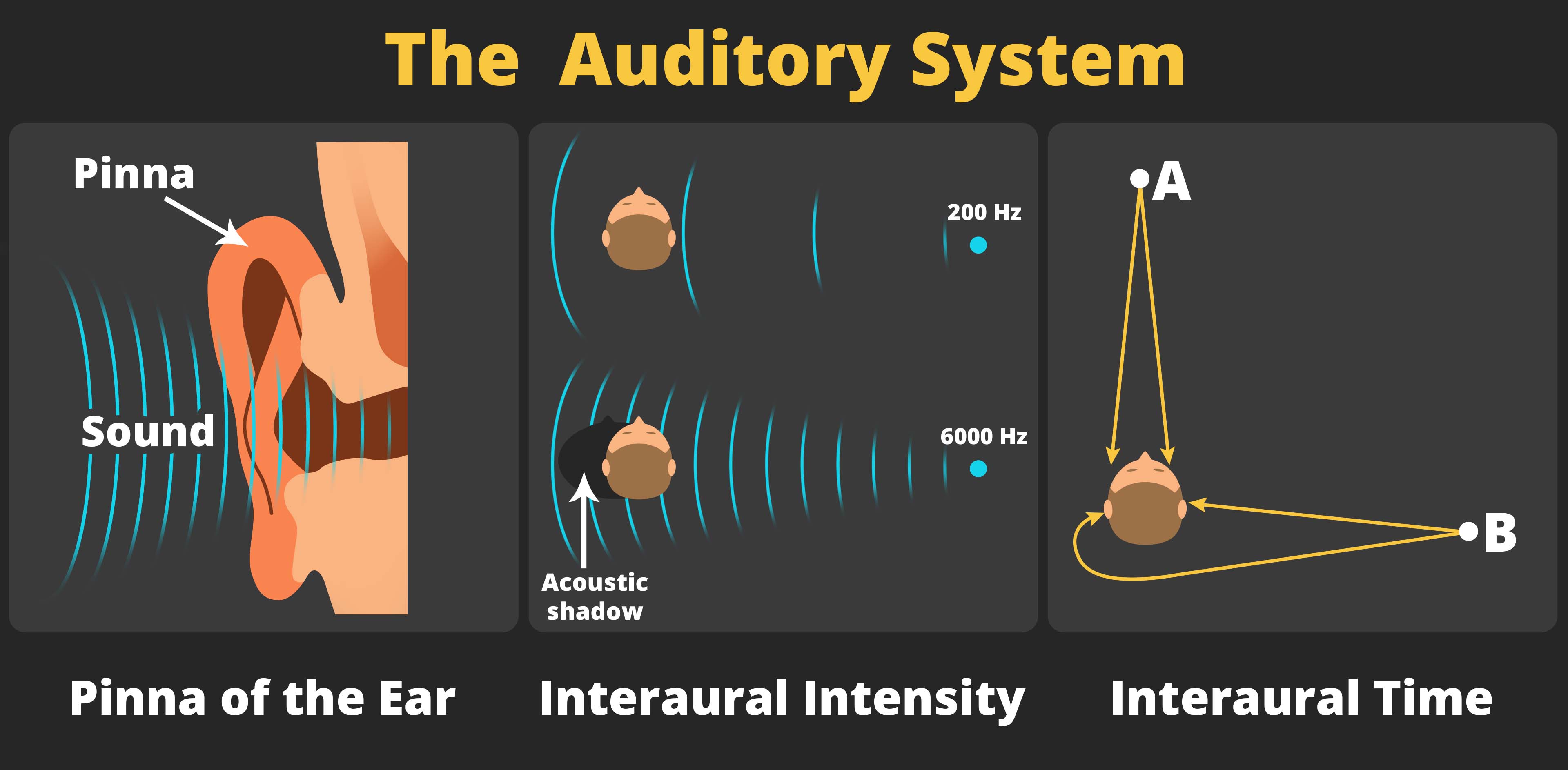

Object localization is also achieved within other sensory systems. In the auditory system, which is responsible for the ability to hear sounds, object localization is primarily achieved through a combination of three processes, all of which are rather remarkable. The first is to use the echoes created by the incoming sound waves bouncing off the folds of the outer ear, known as the pinna. Some animals, like dogs and horses, can move their pinnae to help localize sounds. In humans, the pinna contains several folds, and these folds create different patterns of echoes depending on the direction of the incoming sound. The auditory cortex in the brain can decode the signals created by these echoes in order to localize the source of the sound.

The other two processes in auditory localization rely on comparing what the two ears receive. Interaural intensity involves the comparison of the intensity, or loudness, of the sound in each ear. The head dampens some of the sound on its way to the far ear, so when the sound is louder in the right ear than in the left ear, the auditory system knows the sound is coming from the right. Interaural time involves the comparison of the timing of when the sound reaches each ear. When the sound arrives earlier in the left ear than in the right ear, the brain knows the sound is coming from the left. The difference in the timing of when the sound arrives at each ear is quite small, on the order of 600 millionths of a second or less, but the auditory system is very sensitive to even these small differences in timing. Together, these three cues allow the auditory system to localize sound.
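To get a feel for how small the interaural time difference is, the arithmetic can be sketched with a simple path-length model: the sound travels a little farther to reach the far ear, and the extra distance divided by the speed of sound gives the delay. The ear separation (0.21 m), the speed of sound (343 m/s), and the function name are assumptions made for this sketch, not values from the chapter, and the model ignores the way sound bends around the head.

```python
import math

# Assumed values for illustration only.
SPEED_OF_SOUND_M_PER_S = 343.0  # air at roughly room temperature
EAR_SEPARATION_M = 0.21         # rough adult ear-to-ear distance

def interaural_time_difference(azimuth_deg: float) -> float:
    """Arrival-time difference in seconds for a distant sound source.

    azimuth_deg is 0 for straight ahead and +90 for directly to the right.
    A positive result means the sound reaches the right ear first.
    """
    extra_path_m = EAR_SEPARATION_M * math.sin(math.radians(azimuth_deg))
    return extra_path_m / SPEED_OF_SOUND_M_PER_S

for azimuth in (0, 30, 90, -90):
    itd_microseconds = interaural_time_difference(azimuth) * 1e6
    print(f"azimuth {azimuth:>4} deg -> {itd_microseconds:8.1f} microseconds")
```

Under these assumptions, a sound directly to one side gives a delay of a little over 600 millionths of a second, and a sound straight ahead gives no delay at all.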

Depth Cues

- Distal world in visual perception

- Proximal image

- Depth perception

- Monocular depth cues

- Occlusion

- Motion parallax

- Depth perception

- Binocular disparity

Section Focus Question:

How does the visual system receive and process cues to allow us to perceive distance?

Key Terms:

All of the cues discussed thus far concern the direction of the object. But to localize the object, the perceptual systems must also determine the distance to the object. To understand how the visual system is able to perceive depth, it is helpful to distinguish between the distal world and the proximal image. The distal world refers to the physical world, which is three-dimensional and contains real objects. The proximal image refers to the patterns of stimulation on the back of the eye, and is also called the retinal image. The proximal image is two-dimensional, with depth being the lost third dimension. As a result, determining an object’s distance is an incredibly difficult task.

Depth cues such as occlusion, in which a nearer object blocks the view of a farther one, are called monocular pictorial cues. They are called monocular because they are just as effective when one eye is used as when two eyes are used. They are called pictorial cues because they can be demonstrated using pictures or photographs. There are other monocular cues, such as motion parallax. Hold your right index finger as far away as you can at eye level, and hold your left index finger halfway between your face and your right hand. Close one eye and move your head back and forth. You will notice that the position of one finger relative to the other shifts as you move your head. These patterns of motion can be used to infer the depth of one finger relative to the other.
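The finger demonstration can be approximated with a little trigonometry. The sketch below assumes a sideways head movement of 5 cm and fingers at 30 cm and 60 cm; these numbers and the function name are illustrative choices, not measurements from the chapter. It computes how far, in degrees, each finger appears to shift.

```python
import math

def apparent_shift_deg(head_shift_m: float, distance_m: float) -> float:
    """Angle by which a stationary object appears to move when the head
    shifts sideways by head_shift_m while the object is distance_m away."""
    return math.degrees(math.atan2(head_shift_m, distance_m))

# Assumed values for illustration only.
HEAD_SHIFT_M = 0.05    # sideways head movement
NEAR_FINGER_M = 0.30   # finger held halfway out
FAR_FINGER_M = 0.60    # finger held at arm's length

near_shift = apparent_shift_deg(HEAD_SHIFT_M, NEAR_FINGER_M)
far_shift = apparent_shift_deg(HEAD_SHIFT_M, FAR_FINGER_M)
print(f"near finger shifts {near_shift:.1f} deg, far finger shifts {far_shift:.1f} deg")
```

The nearer finger sweeps through a larger angle than the farther one for the same head movement, and that difference is the information motion parallax provides about relative depth.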

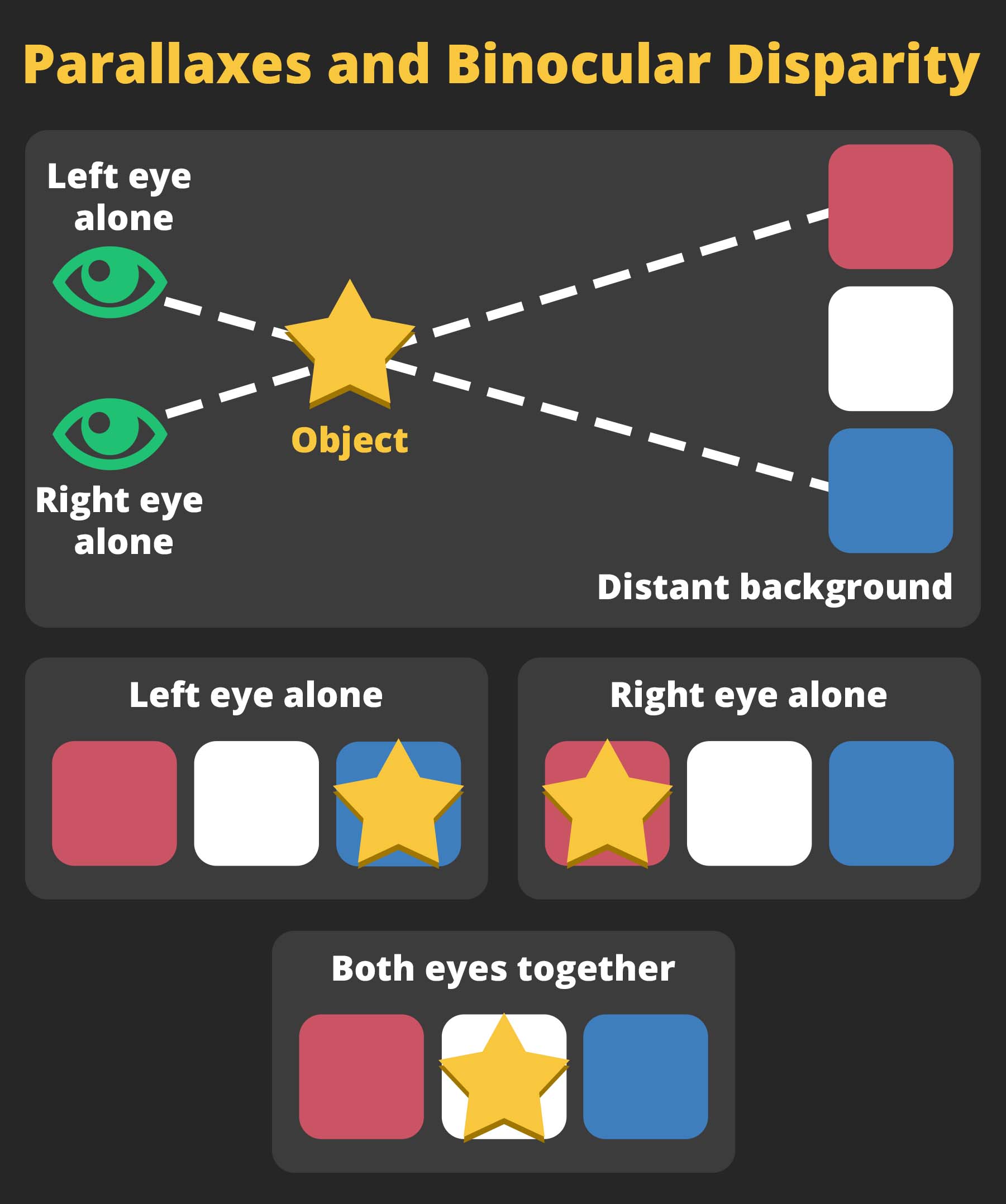

The depth cue of binocular disparity requires two functioning eyes. Binocular means two eyes. Disparity means difference. As the name implies, this depth cue involves using differences between the proximal images of the two eyes. Again, holding up your two index fingers, align your hands so that the closer finger blocks your view of the back finger when looking with only your right eye. Now, without moving your hands, view your hands with your left eye instead. The front finger should no longer block your view and instead should appear to the right of the back finger.

This experience demonstrates that the projections of light into the two eyes give rise to different proximal images. The visual system can use the differences between the two proximal images to infer the distances to the objects. However, this cue is only effective for objects that are close to the perceiver, generally within three to five feet. Beyond that, the proximal images do not differ enough between the two eyes for this cue to be useful. Look at an object far away with one eye and then with the other eye. You will notice that the view of the scene does not change much as you switch eyes, which illustrates that the two images are nearly identical. In this case, the binocular disparities are too small to be useful for perceiving depth.
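Why the two images become nearly identical at a distance can be illustrated with a rough geometric sketch. It assumes the eyes are 6.3 cm apart and that both objects sit straight ahead; the constant, the function names, and the test distances are hypothetical choices for this example. The sketch only shows the trend and does not reproduce the three-to-five-foot figure given above.

```python
import math

INTEROCULAR_M = 0.063  # assumed distance between the two eyes

def vergence_angle_deg(distance_m: float) -> float:
    """Angle between the two eyes' lines of sight to a point straight ahead."""
    return math.degrees(2 * math.atan(INTEROCULAR_M / (2 * distance_m)))

def disparity_deg(near_m: float, far_m: float) -> float:
    """Difference in vergence angle between a nearer and a farther object."""
    return vergence_angle_deg(near_m) - vergence_angle_deg(far_m)

# The same 10 percent depth gap, placed at increasing distances.
for near_m in (0.5, 1.0, 5.0, 50.0):
    far_m = near_m * 1.1
    print(f"objects at {near_m} m and {far_m:.2f} m -> "
          f"disparity {disparity_deg(near_m, far_m):.4f} deg")
```

The disparity shrinks steadily as the pair of objects moves away, which matches the observation that distant scenes look almost the same through either eye.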

Tactile Perception

- Wilder Penfield’s studies on the brain

- Phantom sensations

- Sensory centers in the cortex

- Phantom limb syndrome

- Sound localization

- Ventriloquist effect

- Rolling jumping ball illusion

- Interaural intensity

Section Focus Question:

How does the brain process signals from the tactile system to evaluate sensations from the environment, both real and phantom?

Key Terms:

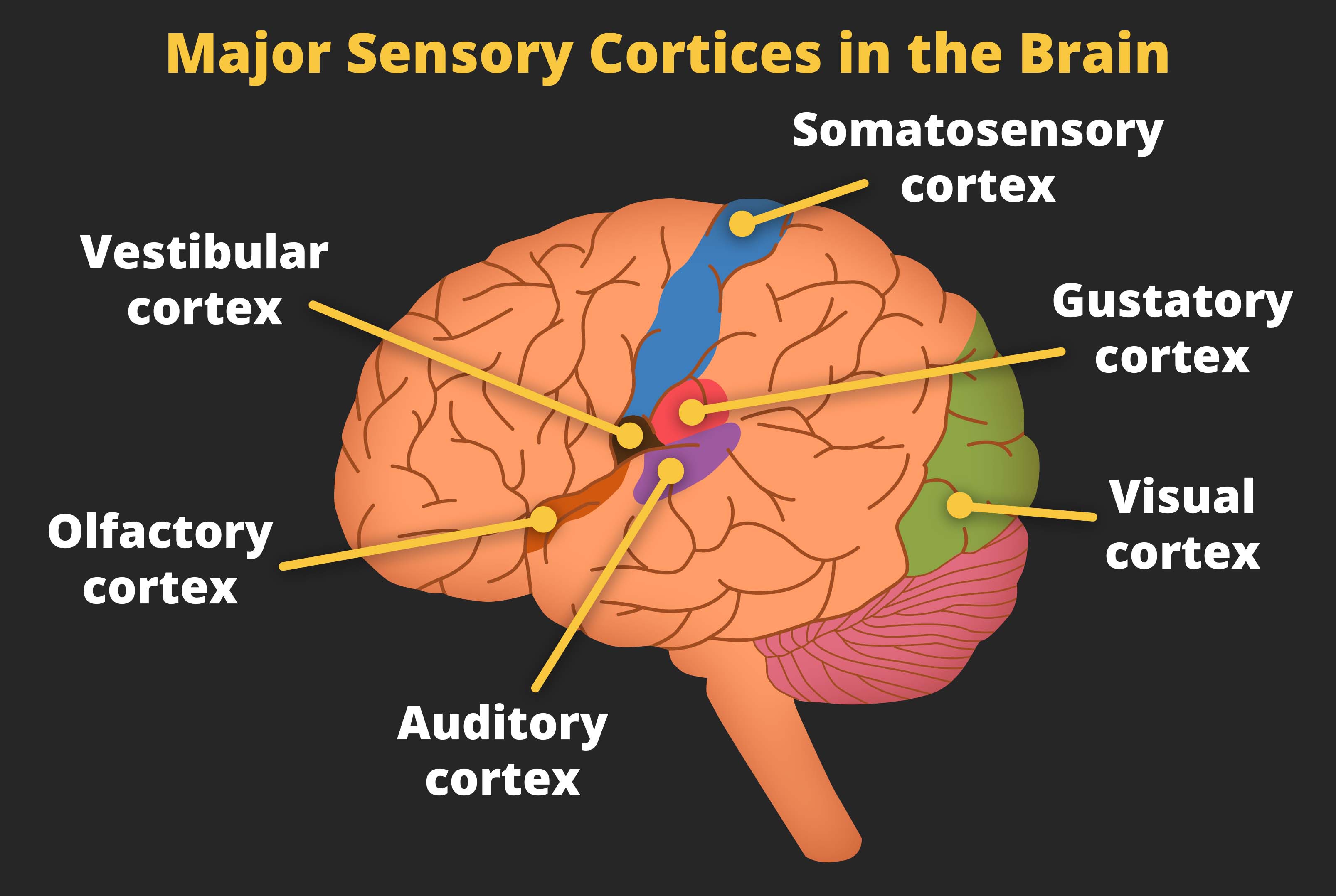

In addition to knowing where objects are located in the environment, it is also important to know where objects are located when they come in contact with our bodies. Our skin contains receptors that can detect touch and changes in temperature on the skin. The tactile system in our brain registers the location of touch depending on which cells in the somatosensory cortex fire. The somatosensory cortex is a small strip of brain matter that is organized by body part. If the cells in the hand area fire, a person will feel a touch in the hand. If the cells in the leg area fire, a person will feel a touch in the leg.

Typically, cells in the somatosensory cortex fire because they receive signals from receptors in the skin. A person feels a touch in his or her hand because receptors in the hand registered a change in pressure in the skin and sent the signals to the hand area in the brain. But there are other ways to stimulate these brain areas and create phantom sensations of touch. The famous neurosurgeon Wilder Penfield used electrodes to stimulate this part of the brain during surgeries. When the hand area was stimulated, the patient reported experiencing touch sensations in his or her hand even though the hand was not being touched.

When a limb is amputated, the area in the brain that once corresponded to that limb no longer receives signals from receptors in the limb. Over time, signals from nearby areas in the brain begin to leak over, causing the cells to fire. As stated before, when the neurons fire, this gives rise to the sensation of touch even when the skin is not being touched. Thus, many amputees experience a phenomenon known as phantom limb syndrome: they experience touch in the limb that has been amputated.

In some cases, the feeling of phantom touch is unpleasant or even painful. If amputees experience an itch, they cannot scratch it because there is no limb to scratch. If they feel that their hand is balled up into a tight fist, the pressure becomes painful, and they cannot relieve the pain because they cannot unclench a hand that is no longer there. Fortunately, a simple solution called a mirror box has been developed. Patients who experience phantom limb pain can position their intact limb next to a mirror. The reflection creates a visual experience of both the intact limb and the amputated limb as being present. They can use their intact limb to recreate the painful position of the amputated limb, then move their intact limb to create the visual experience of the amputated limb relaxing out of that position. Even though the experience is purely visual, in many cases that is enough to relieve the pain.

Multimodal Localization

The mirror box demonstrates how the various senses can influence each other because vision of the amputated limb can ease feelings of touch in the amputated limb. When one sense influences perception in another sense, this is known as multimodal perception. Multimodal effects reveal that what a person sees is not just influenced by information received by the eye but also by auditory information and tactile information. As another example, the taste a person experiences is influenced by what they smell and see.

When trying to localize a sound, visual cues can influence the perception of the sound. This is known as the ventriloquist effect. A professional ventriloquist cannot literally throw his or her voice. Instead, the ventriloquist uses visual cues to make it seem as if the sound were coming from the puppet. These visual cues involve moving the puppet’s lips and not moving the performer’s own lips. The visual system senses the presence of movement by the puppet, and the absence of movement by the ventriloquist, and this creates a bias in the auditory system to hear the sound as if it were coming from the puppet.

Another example of how auditory and visual cues influence each other in localization, or mislocalization, is the rolling-jumping ball illusion. In this illusion, a ball moves horizontally across a checkerboard, then changes path for a short while before continuing to roll horizontally. The visual cues are ambiguous as to whether the ball rolled back and forth along the board or whether the ball jumped into the air before returning to the board.

Auditory cues presented simultaneously with the video bias the perception of the ball’s location. When an auditory cue of a ball rolling is presented throughout the video, the perception is that the ball rolled to the back of the board and then rolled to the front of the board. When the auditory cue is of a ball rolling and the sound stops the moment the ball changes its trajectory, the perception is that the ball jumped up into the air before landing and continuing to roll on the checkerboard. These examples show how vision and audition can influence each other when localizing objects.